Emuru Convolutional VAE

This repository hosts the Emuru Convolutional VAE, described in our paper. The model has a convolutional encoder and decoder, each with four layers whose output channels are 32, 64, 128, and 256, respectively. The encoder downsamples an input RGB image (3 channels, height H, width W) to a single-channel latent representation whose height and width are one-eighth of the original (H/8 × W/8). This design compresses the style information in the image, allowing a lightweight Transformer Decoder to process the latent features efficiently.
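As a quick sanity check on these shapes, the sketch below computes the expected latent shape for a given input size. The helper name `latent_shape` is hypothetical, and the floor division for sizes not divisible by 8 is an assumption; the actual model may round or pad differently.

```python
def latent_shape(height, width, factor=8, latent_channels=1):
    """Expected (channels, height, width) of the latent for an RGB input.

    Assumes 8x spatial downsampling and a single latent channel, as stated
    in the description. Floor division for sizes that are not multiples of
    `factor` is an assumption, not confirmed behavior.
    """
    return (latent_channels, height // factor, width // factor)


# A 64 x 768 text-line image maps to a 1 x 8 x 96 latent.
print(latent_shape(64, 768))  # (1, 8, 96)
```

Each latent position therefore summarizes an 8 × 8 patch of the input, which keeps the sequence seen by the Transformer Decoder short.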

Training Details

- Hardware: NVIDIA RTX 4090

- Iterations: 60,000

- Optimizer: AdamW with a learning rate of 0.0001

- Loss Components:

  - MAE (mean absolute error) Loss: weight of 1

  - WID (writer identification) Loss: weight of 0.005

  - HTR (handwritten text recognition) Loss: weight of 0.3, using noisy teacher-forcing with a probability of 0.3

  - KL Loss: beta parameter of 1e-6
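The weights above suggest that the four terms are combined as a weighted sum. The sketch below assumes exactly that; the function name `total_loss` and the plain-sum form are illustrative assumptions, not the actual training code.

```python
# Loss weights as listed in the training details.
MAE_WEIGHT = 1.0
WID_WEIGHT = 0.005
HTR_WEIGHT = 0.3
KL_BETA = 1e-6


def total_loss(mae, wid, htr, kl):
    """Combine the four loss terms as a weighted sum (assumed form)."""
    return MAE_WEIGHT * mae + WID_WEIGHT * wid + HTR_WEIGHT * htr + KL_BETA * kl
```

The same function works unchanged on scalar PyTorch tensors. With these weights the reconstruction (MAE) term dominates, while the very small beta keeps the KL term from over-regularizing the latent space.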

Auxiliary Networks

- Writer Identification: A ResNet with 6 blocks, trained until it reached 60% accuracy on a synthetic dataset.

- Handwritten Text Recognition (HTR): A Transformer Encoder-Decoder trained until reaching a Character Error Rate (CER) of 0.25 on the synthetic dataset.
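For reference, CER is commonly defined as the Levenshtein (edit) distance between the predicted and reference text, divided by the reference length. The source does not state how the figure was computed, so the sketch below assumes this standard definition; the function names are illustrative.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance between two strings (insert, delete, substitute)."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def cer(reference, hypothesis):
    """Character Error Rate: edit distance divided by reference length."""
    if not reference:
        raise ValueError("reference must be non-empty")
    return edit_distance(reference, hypothesis) / len(reference)
```

Under this definition, a CER of 0.25 corresponds to roughly one character error for every four reference characters.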

Usage

You can load the pre-trained Emuru VAE through Diffusers’ AutoencoderKL interface with a single call:

from diffusers import AutoencoderKL

model = AutoencoderKL.from_pretrained("blowing-up-groundhogs/emuru_vae")

The example below downloads a sample image from the model repository on the Hugging Face Hub, preprocesses it, encodes it into the latent space, decodes it back to image space, and saves the reconstructed image.

Code Example

import torch

from PIL import Image

from diffusers import AutoencoderKL

from huggingface_hub import hf_hub_download

from torchvision.transforms.functional import to_tensor, to_pil_image

# Load the pre-trained Emuru VAE from Hugging Face Hub.

model = AutoencoderKL.from_pretrained("blowing-up-groundhogs/emuru_vae")

# Function to preprocess an RGB image:

# Loads the image, converts it to RGB, and transforms it to a tensor normalized to [0, 1].

def preprocess_image(image_path):
    image = Image.open(image_path).convert("RGB")
    image_tensor = to_tensor(image).unsqueeze(0)  # Add batch dimension
    return image_tensor

# Function to postprocess a tensor back to a PIL image for visualization:

# Clamps the tensor to [0, 1] and converts it to a PIL image.

def postprocess_tensor(tensor):
    tensor = torch.clamp(tensor, 0, 1).squeeze(0)  # Remove batch dimension
    return to_pil_image(tensor)

# Example: Encode and decode an image.

# Replace the following line with your image path.

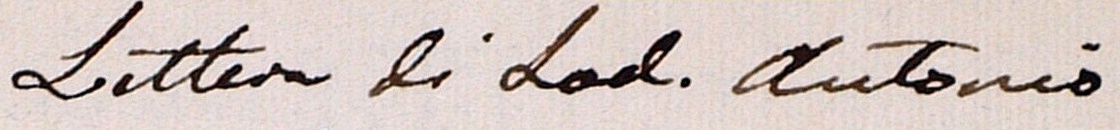

image_path = hf_hub_download(repo_id="blowing-up-groundhogs/emuru_vae", filename="samples/lam_sample.jpg")

input_image = preprocess_image(image_path)

# Encode the image to the latent space.

# The encode() method returns an object with a 'latent_dist' attribute.

# We sample from this distribution to obtain the latent representation.

with torch.no_grad():
    latent_dist = model.encode(input_image).latent_dist
    latents = latent_dist.sample()

# Decode the latent representation back to image space.

with torch.no_grad():
    reconstructed = model.decode(latents).sample

# Load the original image for comparison.

original_image = Image.open(image_path).convert("RGB")

# Convert the reconstructed tensor back to a PIL image.

reconstructed_image = postprocess_tensor(reconstructed)

# Save the reconstructed image.

reconstructed_image.save("reconstructed_image.png")
