---
license: mit
datasets:
- TIGER-Lab/AceCode-89K
language:
- en
base_model:
- Qwen/Qwen2.5-Coder-7B-Instruct
tags:
- acecoder
- code
- Qwen
---

🂡 AceCode-89K

Paper | GitHub | AceCode-89K | AceCodePair-300K | RM/RL Models

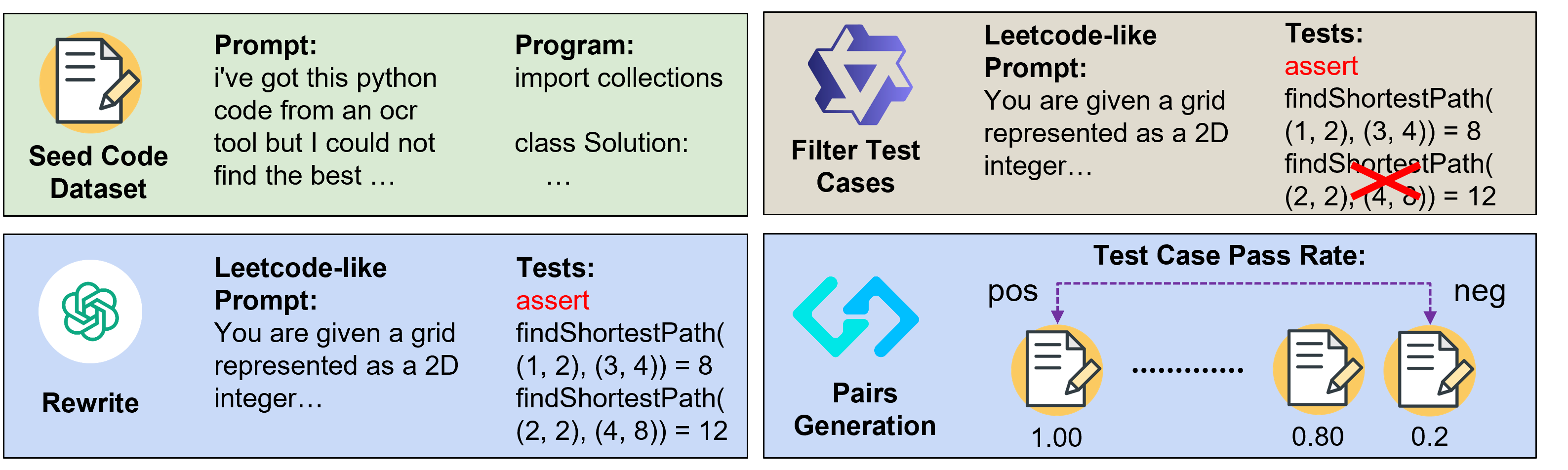

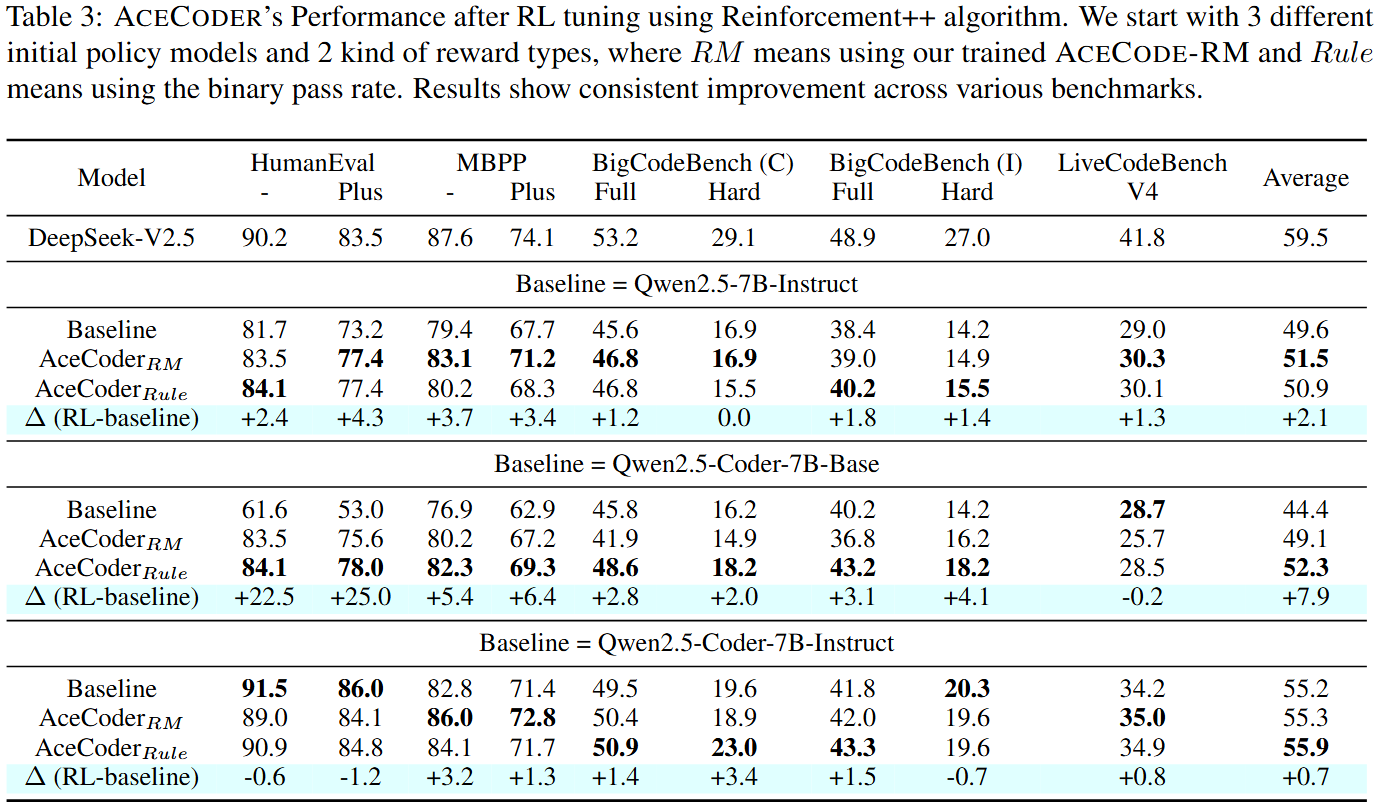

We introduce AceCoder, the first work to propose a fully automated pipeline for synthesizing large-scale, reliable test cases for reward model training and reinforcement learning in the coding scenario. To do this, we curated the AceCode-89K dataset: starting from a seed code dataset, we prompt powerful LLMs to "imagine" proper test cases for each coding question and filter out the noisy ones. We then sample inferences from existing coder models and compute their pass rates, which serve as reliable, verifiable rewards for both training the reward model and running reinforcement learning on coder LLMs.
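To make the pass-rate idea concrete, here is a minimal sketch of scoring one sampled solution against a list of test cases. It assumes the test cases are plain `assert` statements stored as strings; the function name `pass_rate` is hypothetical and not part of the AceCoder codebase. The sketch runs code in-process with `exec`, which is unsafe for untrusted model output, so a real pipeline would need sandboxing and timeouts.

```python
from __future__ import annotations


def pass_rate(solution: str, test_cases: list[str]) -> float:
    """Return the fraction of assert-style test cases that `solution` passes.

    Hypothetical helper for illustration only: it executes code in-process,
    with no sandbox and no timeout.
    """
    if not test_cases:
        return 0.0
    passed = 0
    for test in test_cases:
        namespace: dict = {}  # fresh namespace so tests cannot affect each other
        try:
            exec(solution, namespace)  # define the candidate function(s)
            exec(test, namespace)      # run one assert against them
            passed += 1
        except Exception:
            # A failed assertion or any runtime error counts as a failed test.
            pass
    return passed / len(test_cases)


# Example: one of the three (assumed) test cases is wrong for this solution.
solution = "def add(a, b):\n    return a + b"
tests = [
    "assert add(1, 2) == 3",
    "assert add(0, 0) == 0",
    "assert add(2, 2) == 5",
]
rate = pass_rate(solution, tests)
```

Scoring each sampled inference this way gives one scalar per (question, solution) pair, which is the kind of signal the paragraph above describes using for reward model training and RL.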

Note

- This model is trained on the hard version of TIGER-Lab/AceCode-89K (about 22k examples), using the binary pass rate (a rule-based reward) as the reward.

- You can reproduce the hard version of TIGER-Lab/AceCode-89K using the script in our GitHub repository.

- Training takes about 6 hours on 8 x H100 GPUs, in around 80 optimization steps.

- To reproduce the training, please refer to the training script in our GitHub repository.

- To use the model, please refer to the code in Qwen/Qwen2.5-Coder-7B-Instruct, the base model listed in the metadata.

- Training wandb link
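The first note mentions a binary pass rate used as a rule-based reward. One plausible reading, sketched below, is an all-or-nothing reward: 1.0 when every test case passes and 0.0 otherwise. This interpretation and the name `binary_reward` are assumptions for illustration; the training script in the repository is the authoritative definition.

```python
def binary_reward(num_passed: int, num_total: int) -> float:
    """All-or-nothing reward (assumed interpretation of "binary pass rate").

    Returns 1.0 only if there is at least one test and all tests pass.
    """
    if num_total <= 0:
        return 0.0
    return 1.0 if num_passed == num_total else 0.0


# A solution passing 9 of 10 tests gets no reward under this scheme,
# whereas a fractional pass rate would give it 0.9.
partial = binary_reward(9, 10)
full = binary_reward(10, 10)
```

Compared with a fractional pass rate, a binary signal is sparser but leaves less room for a policy to collect reward from solutions that are only partially correct.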

Usage

from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "TIGER-Lab/AceCoder-Qwen2.5-Coder-7B-Ins-Rule"

# Load the model and tokenizer; dtype and device placement are chosen automatically.
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)
tokenizer = AutoTokenizer.from_pretrained(model_name)

# Build the chat-formatted prompt text.
prompt = "Give me a short introduction to large language model."
messages = [
    {"role": "system", "content": "You are Qwen, created by Alibaba Cloud. You are a helpful assistant."},
    {"role": "user", "content": prompt}
]
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True
)
model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

# Generate up to 512 new tokens.
generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=512
)
# Drop the prompt tokens so that only the newly generated tokens are decoded.
generated_ids = [
    output_ids[len(input_ids):] for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)
]

response = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
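When the prompt is a coding question, the decoded `response` often mixes explanation with a fenced code block. The sketch below shows one way to pull out the code before running tests on it. The helper `extract_code` and the choice to take the first fenced block are assumptions for illustration, not part of the model's API or the AceCoder pipeline.

```python
import re

# Build the three-backtick fence programmatically so this snippet can itself
# be placed inside a fenced code block.
FENCE = "`" * 3
CODE_BLOCK = re.compile(
    FENCE + r"(?:python|py)?[ \t]*\n(.*?)" + FENCE,
    re.DOTALL | re.IGNORECASE,
)


def extract_code(response: str) -> str:
    """Return the first fenced code block in `response`.

    Falls back to the whole response (stripped) if no fenced block is found.
    Hypothetical helper for illustration.
    """
    blocks = CODE_BLOCK.findall(response)
    return blocks[0].strip() if blocks else response.strip()


# Example with a hand-written stand-in for a model response.
example_response = (
    "Here is a solution:\n"
    + FENCE + "python\n"
    + "def add(a, b):\n    return a + b\n"
    + FENCE + "\nIt adds two numbers."
)
code = extract_code(example_response)
```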

Performance

Citation

@article{AceCoder,
    title={AceCoder: Acing Coder RL via Automated Test-Case Synthesis},
    author={Zeng, Huaye and Jiang, Dongfu and Wang, Haozhe and Nie, Ping and Chen, Xiaotong and Chen, Wenhu},
    journal={ArXiv},
    year={2025},
    volume={abs/2502.01718}
}