End of training

- README.md +2 -1

- all_results.json +12 -0

- eval_results.json +7 -0

- train_results.json +8 -0

- trainer_state.json +288 -0

- training_eval_loss.png +0 -0

- training_loss.png +0 -0

README.md

CHANGED

@@ -4,6 +4,7 @@ license: apache-2.0
 base_model: Qwen/Qwen2.5-7B
 tags:
 - llama-factory
+- full
 - generated_from_trainer
 model-index:
 - name: hp_ablations_qwen_epoch1_dcftv1.2
@@ -15,7 +16,7 @@ should probably proofread and complete it, then remove this comment. -->
 
 # hp_ablations_qwen_epoch1_dcftv1.2
 
-This model is a fine-tuned version of [Qwen/Qwen2.5-7B](https://huggingface.co/Qwen/Qwen2.5-7B) on
+This model is a fine-tuned version of [Qwen/Qwen2.5-7B](https://huggingface.co/Qwen/Qwen2.5-7B) on the mlfoundations-dev/oh-dcft-v1.2_no-curation_gpt-4o-mini dataset.
 It achieves the following results on the evaluation set:
 - Loss: 0.6407
 

all_results.json

ADDED

@@ -0,0 +1,12 @@
+{
+  "epoch": 0.9978054133138259,
+  "eval_loss": 0.6406828165054321,
+  "eval_runtime": 345.7045,
+  "eval_samples_per_second": 26.638,
+  "eval_steps_per_second": 0.417,
+  "total_flos": 714820936531968.0,
+  "train_loss": 0.6610767383379671,
+  "train_runtime": 18392.4604,
+  "train_samples_per_second": 9.512,
+  "train_steps_per_second": 0.019
+}
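The ten keys in all_results.json match the union of the keys in eval_results.json and train_results.json from this same commit, with `epoch` shared between the two. The sketch below checks that reading using the values copied from the diffs; treating the combined file as a plain key-wise union is an assumption, not something the commit states.

```python
# Values copied from the eval_results.json and train_results.json diffs in this commit.
eval_results = {
    "epoch": 0.9978054133138259,
    "eval_loss": 0.6406828165054321,
    "eval_runtime": 345.7045,
    "eval_samples_per_second": 26.638,
    "eval_steps_per_second": 0.417,
}
train_results = {
    "epoch": 0.9978054133138259,
    "total_flos": 714820936531968.0,
    "train_loss": 0.6610767383379671,
    "train_runtime": 18392.4604,
    "train_samples_per_second": 9.512,
    "train_steps_per_second": 0.019,
}

# Assumption: the combined file is a key-wise union of the two dictionaries.
combined = {**train_results, **eval_results}

# Print keys in sorted order, mirroring the alphabetical order seen in the diff.
for key in sorted(combined):
    print(f"{key}: {combined[key]}")
```

The merged dictionary has ten entries, which lines up with the twelve added lines (ten keys plus the two braces) reported for all_results.json.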

eval_results.json

ADDED

@@ -0,0 +1,7 @@
+{
+  "epoch": 0.9978054133138259,
+  "eval_loss": 0.6406828165054321,
+  "eval_runtime": 345.7045,
+  "eval_samples_per_second": 26.638,
+  "eval_steps_per_second": 0.417
+}
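As a rough reading aid for the evaluation numbers, the sketch below converts the reported loss to a perplexity and backs out approximate sample and step counts from the runtime and throughput fields. It assumes `eval_loss` is a mean token-level cross-entropy in nats and that the throughput fields are simple averages over `eval_runtime`; neither is stated in the commit, and the rounded throughput values make the counts approximate.

```python
import math

# Values copied from eval_results.json.
eval_loss = 0.6406828165054321
eval_runtime_s = 345.7045
eval_samples_per_second = 26.638
eval_steps_per_second = 0.417

# Assumption: the loss is mean cross-entropy in nats, so exp(loss) is a perplexity.
perplexity = math.exp(eval_loss)

# Assumption: throughput = count / runtime, so count is roughly runtime * throughput.
approx_eval_samples = eval_runtime_s * eval_samples_per_second
approx_eval_steps = eval_runtime_s * eval_steps_per_second

print(f"perplexity ~ {perplexity:.3f}")
print(f"eval samples ~ {approx_eval_samples:.0f}")
print(f"eval steps ~ {approx_eval_steps:.0f}")
```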

train_results.json

ADDED

@@ -0,0 +1,8 @@
+{
+  "epoch": 0.9978054133138259,
+  "total_flos": 714820936531968.0,
+  "train_loss": 0.6610767383379671,
+  "train_runtime": 18392.4604,
+  "train_samples_per_second": 9.512,
+  "train_steps_per_second": 0.019
+}
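The training throughput fields can be turned into more readable quantities with a little arithmetic. The sketch below combines them with `global_step` (341, taken from trainer_state.json in this commit) to estimate wall-clock hours and samples processed per optimizer step. Interpreting the per-step figure as an effective global batch size is an assumption: the commit does not state the device count or gradient accumulation setting, and the throughput values are rounded.

```python
# Values copied from train_results.json; global_step comes from trainer_state.json.
train_runtime_s = 18392.4604
train_samples_per_second = 9.512
global_step = 341

# Wall-clock training time in hours.
train_hours = train_runtime_s / 3600

# Assumption: throughput = samples / runtime, so samples is roughly runtime * throughput.
approx_train_samples = train_runtime_s * train_samples_per_second

# Approximate samples consumed per optimizer step (a hypothetical effective batch size).
approx_samples_per_step = approx_train_samples / global_step

print(f"training time ~ {train_hours:.2f} h")
print(f"samples seen ~ {approx_train_samples:.0f}")
print(f"samples per step ~ {approx_samples_per_step:.0f}")
```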

trainer_state.json

ADDED

@@ -0,0 +1,288 @@
+{
+  "best_metric": null,
+  "best_model_checkpoint": null,
+  "epoch": 0.9978054133138259,
+  "eval_steps": 500,
+  "global_step": 341,
+  "is_hyper_param_search": false,
+  "is_local_process_zero": true,
+  "is_world_process_zero": true,
+  "log_history": [
+    {
+      "epoch": 0.029261155815654718,
+      "grad_norm": 1.3814841088477259,
+      "learning_rate": 5e-06,
+      "loss": 0.7983,
+      "step": 10
+    },
+    {
+      "epoch": 0.058522311631309436,
+      "grad_norm": 1.2887254498462366,
+      "learning_rate": 5e-06,
+      "loss": 0.7383,
+      "step": 20
+    },
+    {
+      "epoch": 0.08778346744696415,
+      "grad_norm": 1.2838337638318262,
+      "learning_rate": 5e-06,
+      "loss": 0.7071,
+      "step": 30
+    },
+    {
+      "epoch": 0.11704462326261887,
+      "grad_norm": 1.0922966245519412,
+      "learning_rate": 5e-06,
+      "loss": 0.6966,
+      "step": 40
+    },
+    {
+      "epoch": 0.14630577907827358,
+      "grad_norm": 1.1509702467959955,
+      "learning_rate": 5e-06,
+      "loss": 0.6871,
+      "step": 50
+    },
+    {
+      "epoch": 0.1755669348939283,
+      "grad_norm": 0.9131326378339343,
+      "learning_rate": 5e-06,
+      "loss": 0.6766,
+      "step": 60
+    },
+    {
+      "epoch": 0.20482809070958302,
+      "grad_norm": 0.6023605382305695,
+      "learning_rate": 5e-06,
+      "loss": 0.67,
+      "step": 70
+    },
+    {
+      "epoch": 0.23408924652523774,
+      "grad_norm": 0.4161189143299673,
+      "learning_rate": 5e-06,
+      "loss": 0.6647,
+      "step": 80
+    },
+    {
+      "epoch": 0.26335040234089246,
+      "grad_norm": 0.4292872692725048,
+      "learning_rate": 5e-06,
+      "loss": 0.662,
+      "step": 90
+    },
+    {
+      "epoch": 0.29261155815654716,
+      "grad_norm": 0.4514981368981087,
+      "learning_rate": 5e-06,
+      "loss": 0.6544,
+      "step": 100
+    },
+    {
+      "epoch": 0.3218727139722019,
+      "grad_norm": 0.42808475020683995,
+      "learning_rate": 5e-06,
+      "loss": 0.6632,
+      "step": 110
+    },
+    {
+      "epoch": 0.3511338697878566,
+      "grad_norm": 0.37727288970274103,
+      "learning_rate": 5e-06,
+      "loss": 0.6681,
+      "step": 120
+    },
+    {
+      "epoch": 0.38039502560351135,
+      "grad_norm": 0.3822819962955356,
+      "learning_rate": 5e-06,
+      "loss": 0.6519,
+      "step": 130
+    },
+    {
+      "epoch": 0.40965618141916604,
+      "grad_norm": 0.36899229568727576,
+      "learning_rate": 5e-06,
+      "loss": 0.6526,
+      "step": 140
+    },
+    {
+      "epoch": 0.4389173372348208,
+      "grad_norm": 0.3468922138887062,
+      "learning_rate": 5e-06,
+      "loss": 0.648,
+      "step": 150
+    },
+    {
+      "epoch": 0.4681784930504755,
+      "grad_norm": 0.3795489753723712,
+      "learning_rate": 5e-06,
+      "loss": 0.6499,
+      "step": 160
+    },
+    {
+      "epoch": 0.49743964886613024,
+      "grad_norm": 0.36716418800909245,
+      "learning_rate": 5e-06,
+      "loss": 0.655,
+      "step": 170
+    },
+    {
+      "epoch": 0.5267008046817849,
+      "grad_norm": 0.33393389585281086,
+      "learning_rate": 5e-06,
+      "loss": 0.6546,
+      "step": 180
+    },
+    {
+      "epoch": 0.5559619604974396,
+      "grad_norm": 0.3581250031024453,
+      "learning_rate": 5e-06,
+      "loss": 0.6431,
+      "step": 190
+    },
+    {
+      "epoch": 0.5852231163130943,
+      "grad_norm": 0.34112480920310834,
+      "learning_rate": 5e-06,
+      "loss": 0.6443,
+      "step": 200
+    },
+    {
+      "epoch": 0.6144842721287491,
+      "grad_norm": 0.3396720319535308,
+      "learning_rate": 5e-06,
+      "loss": 0.6509,
+      "step": 210
+    },
+    {
+      "epoch": 0.6437454279444038,
+      "grad_norm": 0.354125295809357,
+      "learning_rate": 5e-06,
+      "loss": 0.6389,
+      "step": 220
+    },
+    {
+      "epoch": 0.6730065837600585,
+      "grad_norm": 0.3606633109240175,
+      "learning_rate": 5e-06,
+      "loss": 0.6388,
+      "step": 230
+    },
+    {
+      "epoch": 0.7022677395757132,
+      "grad_norm": 0.3251299701359152,
+      "learning_rate": 5e-06,
+      "loss": 0.6457,
+      "step": 240
+    },
+    {
+      "epoch": 0.731528895391368,
+      "grad_norm": 0.33939812328375596,
+      "learning_rate": 5e-06,
+      "loss": 0.6441,
+      "step": 250
+    },
+    {
+      "epoch": 0.7607900512070227,
+      "grad_norm": 0.3366111360440969,
+      "learning_rate": 5e-06,
+      "loss": 0.6467,
+      "step": 260
+    },
+    {
+      "epoch": 0.7900512070226774,
+      "grad_norm": 0.37753830325225896,
+      "learning_rate": 5e-06,
+      "loss": 0.649,
+      "step": 270
+    },
+    {
+      "epoch": 0.8193123628383321,
+      "grad_norm": 0.34478553790521904,
+      "learning_rate": 5e-06,
+      "loss": 0.6477,
+      "step": 280
+    },
+    {
+      "epoch": 0.8485735186539868,
+      "grad_norm": 0.33926125432670784,
+      "learning_rate": 5e-06,
+      "loss": 0.64,
+      "step": 290
+    },
+    {
+      "epoch": 0.8778346744696416,
+      "grad_norm": 0.3502233770134374,
+      "learning_rate": 5e-06,
+      "loss": 0.6318,
+      "step": 300
+    },
+    {
+      "epoch": 0.9070958302852963,
+      "grad_norm": 0.34993547260225705,
+      "learning_rate": 5e-06,
+      "loss": 0.6449,
+      "step": 310
+    },
+    {
+      "epoch": 0.936356986100951,
+      "grad_norm": 0.3358780908708201,
+      "learning_rate": 5e-06,
+      "loss": 0.6369,
+      "step": 320
+    },
+    {
+      "epoch": 0.9656181419166057,
+      "grad_norm": 0.37650022189557825,
+      "learning_rate": 5e-06,
+      "loss": 0.6402,
+      "step": 330
+    },
+    {
+      "epoch": 0.9948792977322605,
+      "grad_norm": 0.3494056493360394,
+      "learning_rate": 5e-06,
+      "loss": 0.6362,
+      "step": 340
+    },
+    {
+      "epoch": 0.9978054133138259,
+      "eval_loss": 0.6406828165054321,
+      "eval_runtime": 343.5643,
+      "eval_samples_per_second": 26.804,
+      "eval_steps_per_second": 0.419,
+      "step": 341
+    },
+    {
+      "epoch": 0.9978054133138259,
+      "step": 341,
+      "total_flos": 714820936531968.0,
+      "train_loss": 0.6610767383379671,
+      "train_runtime": 18392.4604,
+      "train_samples_per_second": 9.512,
+      "train_steps_per_second": 0.019
+    }
+  ],
+  "logging_steps": 10,
+  "max_steps": 341,
+  "num_input_tokens_seen": 0,
+  "num_train_epochs": 1,
+  "save_steps": 500,
+  "stateful_callbacks": {
+    "TrainerControl": {
+      "args": {
+        "should_epoch_stop": false,
+        "should_evaluate": false,
+        "should_log": false,
+        "should_save": true,
+        "should_training_stop": true
+      },
+      "attributes": {}
+    }
+  },
+  "total_flos": 714820936531968.0,
+  "train_batch_size": 8,
+  "trial_name": null,
+  "trial_params": null
+}
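The `log_history` list mixes three kinds of entries: periodic training logs (with `loss`), an evaluation record (with `eval_loss`), and a final summary (with `train_loss`). A minimal sketch of separating them is shown below. It embeds only a short excerpt of the file (the first and last training logs plus the two step-341 entries), and the variable names are illustrative rather than part of any library.

```python
import json

# Excerpt of trainer_state.json from this commit: first and last training logs,
# the evaluation record, and the end-of-training summary.
excerpt = """
{
  "global_step": 341,
  "log_history": [
    {"epoch": 0.029261155815654718, "grad_norm": 1.3814841088477259,
     "learning_rate": 5e-06, "loss": 0.7983, "step": 10},
    {"epoch": 0.9948792977322605, "grad_norm": 0.3494056493360394,
     "learning_rate": 5e-06, "loss": 0.6362, "step": 340},
    {"epoch": 0.9978054133138259, "eval_loss": 0.6406828165054321,
     "eval_runtime": 343.5643, "eval_samples_per_second": 26.804,
     "eval_steps_per_second": 0.419, "step": 341},
    {"epoch": 0.9978054133138259, "step": 341, "total_flos": 714820936531968.0,
     "train_loss": 0.6610767383379671, "train_runtime": 18392.4604,
     "train_samples_per_second": 9.512, "train_steps_per_second": 0.019}
  ]
}
"""

state = json.loads(excerpt)

# Dictionary membership is exact, so "loss" does not match "eval_loss" or "train_loss".
train_logs = [e for e in state["log_history"] if "loss" in e]
eval_logs = [e for e in state["log_history"] if "eval_loss" in e]
summaries = [e for e in state["log_history"] if "train_loss" in e]

# Change in logged training loss between the first and last logged steps in the excerpt.
loss_drop = train_logs[0]["loss"] - train_logs[-1]["loss"]

print(f"train logs: {len(train_logs)}, eval logs: {len(eval_logs)}")
print(f"logged loss fell by {loss_drop:.4f} between steps "
      f"{train_logs[0]['step']} and {train_logs[-1]['step']}")
```

With the full file loaded in place of the excerpt, the same three filters would give the 34 training logs, one evaluation record, and one summary visible in the diff.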

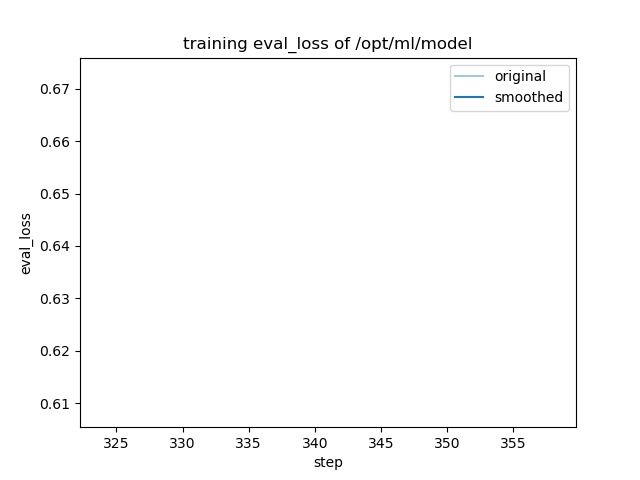

training_eval_loss.png

ADDED


training_loss.png

ADDED
