Upload folder using huggingface_hub (#2)

- 1908e225640895305f2166e92906b76cb5e1577d35c2096ec0fb015450d2a60c (5a124f50e12ef75bd986ba36489193aa8ed541bd)

- config.json +1 -1

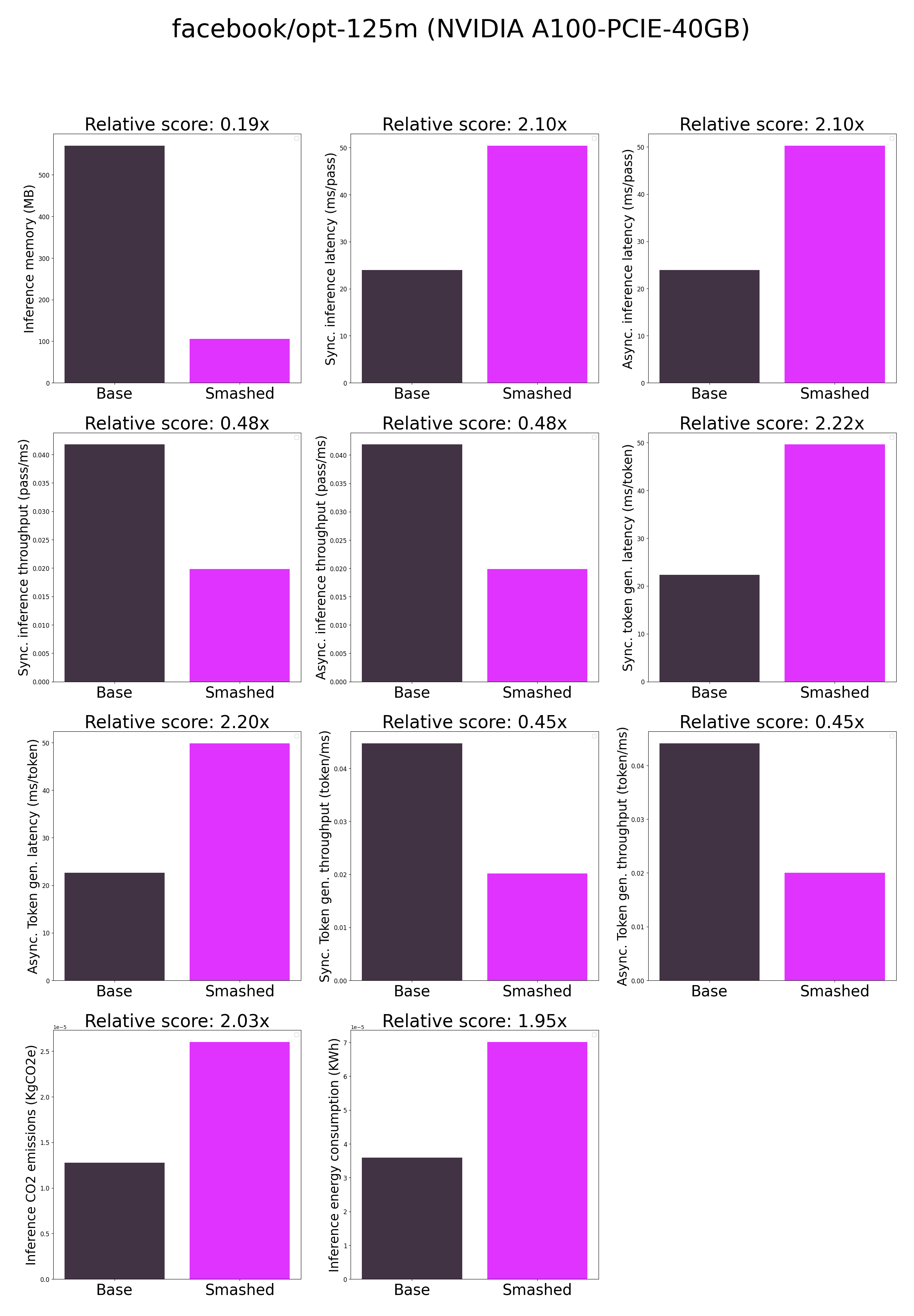

- plots.png +0 -0

- results.json +34 -0

- smash_config.json +6 -6

config.json

CHANGED

@@ -24,7 +24,7 @@
   "pad_token_id": 1,
   "prefix": "</s>",
   "torch_dtype": "float16",
-  "transformers_version": "4.
+  "transformers_version": "4.40.0",
   "use_cache": true,
   "vocab_size": 50272,
   "word_embed_proj_dim": 768
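The only change to config.json is the recorded `transformers_version`. As a minimal sketch of how a consumer might read that field, the snippet below parses the version string into a tuple so it compares numerically rather than lexically. The helper `version_tuple` and the inline JSON fragment are illustrative assumptions, not part of the repository.

```python
import json

# Fragment of the updated config.json, copied from the diff above.
config_fragment = json.loads(
    '{"torch_dtype": "float16", "transformers_version": "4.40.0", "vocab_size": 50272}'
)


def version_tuple(version: str) -> tuple:
    """Split a dotted release string such as '4.40.0' into integers (hypothetical helper)."""
    return tuple(int(part) for part in version.split("."))


recorded = version_tuple(config_fragment["transformers_version"])

# Comparing tuples avoids the lexical trap where "4.40.0" sorts before "4.9.0".
is_newer_than_4_9 = recorded > version_tuple("4.9.0")
print(recorded, is_newer_than_4_9)
```

This simple parser assumes plain numeric releases; a pre-release suffix such as `.dev0` would need a fuller version parser.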

plots.png

CHANGED

results.json

ADDED

@@ -0,0 +1,34 @@
+{
+  "base_current_gpu_type": "NVIDIA A100-PCIE-40GB",
+  "base_current_gpu_total_memory": 40339.3125,
+  "base_memory_inference_first": 690.0,
+  "base_memory_inference": 570.0,
+  "base_token_generation_latency_sync": 25.232858657836914,
+  "base_token_generation_latency_async": 25.168074667453766,
+  "base_token_generation_throughput_sync": 0.03963086440423651,
+  "base_token_generation_throughput_async": 0.03973287640048031,
+  "base_token_generation_CO2_emissions": 6.916667409165086e-06,
+  "base_token_generation_energy_consumption": 0.001975681904854854,
+  "base_inference_latency_sync": 25.73680648803711,
+  "base_inference_latency_async": 25.754165649414062,
+  "base_inference_throughput_sync": 0.03885485949722692,
+  "base_inference_throughput_async": 0.03882867003391939,
+  "base_inference_CO2_emissions": 8.20508156037289e-06,
+  "base_inference_energy_consumption": 1.885578995579329e-05,
+  "smashed_current_gpu_type": "NVIDIA A100-PCIE-40GB",
+  "smashed_current_gpu_total_memory": 40339.3125,
+  "smashed_memory_inference_first": 104.0,
+  "smashed_memory_inference": 106.0,
+  "smashed_token_generation_latency_sync": 53.81842727661133,
+  "smashed_token_generation_latency_async": 53.83266881108284,
+  "smashed_token_generation_throughput_sync": 0.01858099633533113,
+  "smashed_token_generation_throughput_async": 0.018576080697565642,
+  "smashed_token_generation_CO2_emissions": 1.4030177588319765e-05,
+  "smashed_token_generation_energy_consumption": 0.004235501136593081,
+  "smashed_inference_latency_sync": 53.602509307861325,
+  "smashed_inference_latency_async": 53.591203689575195,
+  "smashed_inference_throughput_sync": 0.018655843036313607,
+  "smashed_inference_throughput_async": 0.018659778679211203,
+  "smashed_inference_CO2_emissions": 1.382929576343033e-05,
+  "smashed_inference_energy_consumption": 3.627959529056468e-05
+}
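The results.json values above pair each `base_*` metric with a `smashed_*` counterpart, so the trade-off can be read off as a ratio. The sketch below uses a subset of the values copied from the diff; the `ratio` helper is a hypothetical name, and the file itself does not state units, so only unitless ratios are computed.

```python
import json

# Subset of results.json, copied from the diff above.
results = json.loads("""
{
  "base_memory_inference": 570.0,
  "base_token_generation_latency_sync": 25.232858657836914,
  "base_token_generation_throughput_sync": 0.03963086440423651,
  "smashed_memory_inference": 106.0,
  "smashed_token_generation_latency_sync": 53.81842727661133,
  "smashed_token_generation_throughput_sync": 0.01858099633533113
}
""")


def ratio(metric: str) -> float:
    """Return smashed / base for one metric suffix (hypothetical helper)."""
    return results[f"smashed_{metric}"] / results[f"base_{metric}"]


memory_ratio = ratio("memory_inference")
latency_ratio = ratio("token_generation_latency_sync")
print(f"memory:  {memory_ratio:.2f}x of base")
print(f"latency: {latency_ratio:.2f}x of base")

# In this file the reported throughput matches the reciprocal of the latency.
reciprocal = 1.0 / results["base_token_generation_latency_sync"]
print(abs(reciprocal - results["base_token_generation_throughput_sync"]) < 1e-6)
```

On these numbers the smashed model uses roughly 0.19x the inference memory of the base model at roughly 2.13x the per-token latency, measured on the same GPU type.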

smash_config.json

CHANGED

@@ -2,23 +2,23 @@
   "api_key": null,
   "verify_url": "http://johnrachwan.pythonanywhere.com",
   "smash_config": {
-    "pruners": "
+    "pruners": "[]",
     "pruning_ratio": 0.0,
-    "factorizers": "
+    "factorizers": "[]",
     "quantizers": "['hqq']",
     "weight_quantization_bits": 2,
-    "output_deviation": 0.
-    "compilers": "
+    "output_deviation": 0.01,
+    "compilers": "[]",
     "static_batch": true,
     "static_shape": true,
     "controlnet": "None",
     "unet_dim": 4,
     "device": "cuda",
-    "cache_dir": "/ceph/hdd/staff/charpent/.cache/
+    "cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsmj5uzwwc",
     "batch_size": 1,
     "tokenizer": "GPT2TokenizerFast(name_or_path='facebook/opt-125m', vocab_size=50265, model_max_length=1000000000000000019884624838656, is_fast=True, padding_side='right', truncation_side='right', special_tokens={'bos_token': '</s>', 'eos_token': '</s>', 'unk_token': '</s>', 'pad_token': '<pad>'}, clean_up_tokenization_spaces=True), added_tokens_decoder={\n\t1: AddedToken(\"<pad>\", rstrip=False, lstrip=False, single_word=False, normalized=True, special=True),\n\t2: AddedToken(\"</s>\", rstrip=False, lstrip=False, single_word=False, normalized=True, special=True),\n}",
     "task": "text_text_generation",
-    "model_config": "{'return_dict': True, 'output_hidden_states': False, 'output_attentions': False, 'torchscript': False, 'torch_dtype': 'float16', 'use_bfloat16': False, 'tf_legacy_loss': False, 'pruned_heads': {}, 'tie_word_embeddings': True, 'chunk_size_feed_forward': 0, 'is_encoder_decoder': False, 'is_decoder': False, 'cross_attention_hidden_size': None, 'add_cross_attention': False, 'tie_encoder_decoder': False, 'max_length': 20, 'min_length': 0, 'do_sample': False, 'early_stopping': False, 'num_beams': 1, 'num_beam_groups': 1, 'diversity_penalty': 0.0, 'temperature': 1.0, 'top_k': 50, 'top_p': 1.0, 'typical_p': 1.0, 'repetition_penalty': 1.0, 'length_penalty': 1.0, 'no_repeat_ngram_size': 0, 'encoder_no_repeat_ngram_size': 0, 'bad_words_ids': None, 'num_return_sequences': 1, 'output_scores': False, 'return_dict_in_generate': False, 'forced_bos_token_id': None, 'forced_eos_token_id': None, 'remove_invalid_values': False, 'exponential_decay_length_penalty': None, 'suppress_tokens': None, 'begin_suppress_tokens': None, 'architectures': ['OPTForCausalLM'], 'finetuning_task': None, 'id2label': {0: 'LABEL_0', 1: 'LABEL_1'}, 'label2id': {'LABEL_0': 0, 'LABEL_1': 1}, 'tokenizer_class': None, 'prefix': '</s>', 'bos_token_id': 2, 'pad_token_id': 1, 'eos_token_id': 2, 'sep_token_id': None, 'decoder_start_token_id': None, 'task_specific_params': None, 'problem_type': None, '_name_or_path': 'facebook/opt-125m', 'transformers_version': '4.
+    "model_config": "{'return_dict': True, 'output_hidden_states': False, 'output_attentions': False, 'torchscript': False, 'torch_dtype': 'float16', 'use_bfloat16': False, 'tf_legacy_loss': False, 'pruned_heads': {}, 'tie_word_embeddings': True, 'chunk_size_feed_forward': 0, 'is_encoder_decoder': False, 'is_decoder': False, 'cross_attention_hidden_size': None, 'add_cross_attention': False, 'tie_encoder_decoder': False, 'max_length': 20, 'min_length': 0, 'do_sample': False, 'early_stopping': False, 'num_beams': 1, 'num_beam_groups': 1, 'diversity_penalty': 0.0, 'temperature': 1.0, 'top_k': 50, 'top_p': 1.0, 'typical_p': 1.0, 'repetition_penalty': 1.0, 'length_penalty': 1.0, 'no_repeat_ngram_size': 0, 'encoder_no_repeat_ngram_size': 0, 'bad_words_ids': None, 'num_return_sequences': 1, 'output_scores': False, 'return_dict_in_generate': False, 'forced_bos_token_id': None, 'forced_eos_token_id': None, 'remove_invalid_values': False, 'exponential_decay_length_penalty': None, 'suppress_tokens': None, 'begin_suppress_tokens': None, 'architectures': ['OPTForCausalLM'], 'finetuning_task': None, 'id2label': {0: 'LABEL_0', 1: 'LABEL_1'}, 'label2id': {'LABEL_0': 0, 'LABEL_1': 1}, 'tokenizer_class': None, 'prefix': '</s>', 'bos_token_id': 2, 'pad_token_id': 1, 'eos_token_id': 2, 'sep_token_id': None, 'decoder_start_token_id': None, 'task_specific_params': None, 'problem_type': None, '_name_or_path': 'facebook/opt-125m', 'transformers_version': '4.40.0', 'activation_dropout': 0.0, 'model_type': 'opt', 'vocab_size': 50272, 'max_position_embeddings': 2048, 'num_attention_heads': 12, 'word_embed_proj_dim': 768, 'ffn_dim': 3072, 'hidden_size': 768, 'num_hidden_layers': 12, 'dropout': 0.1, 'attention_dropout': 0.0, 'activation_function': 'relu', 'init_std': 0.02, 'layerdrop': 0.0, 'use_cache': True, 'do_layer_norm_before': True, 'enable_bias': True, 'layer_norm_elementwise_affine': True, '_remove_final_layer_norm': False}",
     "model_name": "facebook/opt-125m",
     "max_batch_size": 1,
     "qtype_weight": "torch.qint8",
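In the updated smash_config.json, list-valued settings such as `pruners`, `quantizers` and `compilers` are stored as stringified Python lists (`"[]"`, `"['hqq']"`) rather than JSON arrays. The sketch below shows one way a reader might decode them with the standard library; `parse_list_field` is a hypothetical helper, and the dictionary is a hand-copied subset of the values shown above.

```python
import ast

# Subset of the new smash_config values; list-like fields are strings, not JSON arrays.
smash_config = {
    "pruners": "[]",
    "factorizers": "[]",
    "quantizers": "['hqq']",
    "compilers": "[]",
    "weight_quantization_bits": 2,
}


def parse_list_field(value: str) -> list:
    """Safely decode a stringified Python list such as "['hqq']" (hypothetical helper)."""
    parsed = ast.literal_eval(value)  # evaluates literals only, never arbitrary code
    if not isinstance(parsed, list):
        raise ValueError(f"expected a list literal, got {value!r}")
    return parsed


active = {
    key: parse_list_field(smash_config[key])
    for key in ("pruners", "factorizers", "quantizers", "compilers")
}

# Keep only the method families that actually have an entry.
enabled = {key: methods for key, methods in active.items() if methods}
print(enabled, smash_config["weight_quantization_bits"])
```

For this commit the only non-empty family is `quantizers`, with `hqq` at 2 weight bits. `json.loads` would reject `"['hqq']"` because of the single quotes, which is why `ast.literal_eval` is used here.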